A single unsolicited AI-generated phone call cost a European fintech company €220,000 in GDPR fines last year. The technology did not fail; no one obtained the recipient's consent before hitting dial.

That fine took four minutes to earn. The voice agent worked perfectly. The compliance team did not.

Updated July 2025

What You Will Gain From This Playbook:

- Proven compliance frameworks that enterprise DPOs sign off on in days, not months

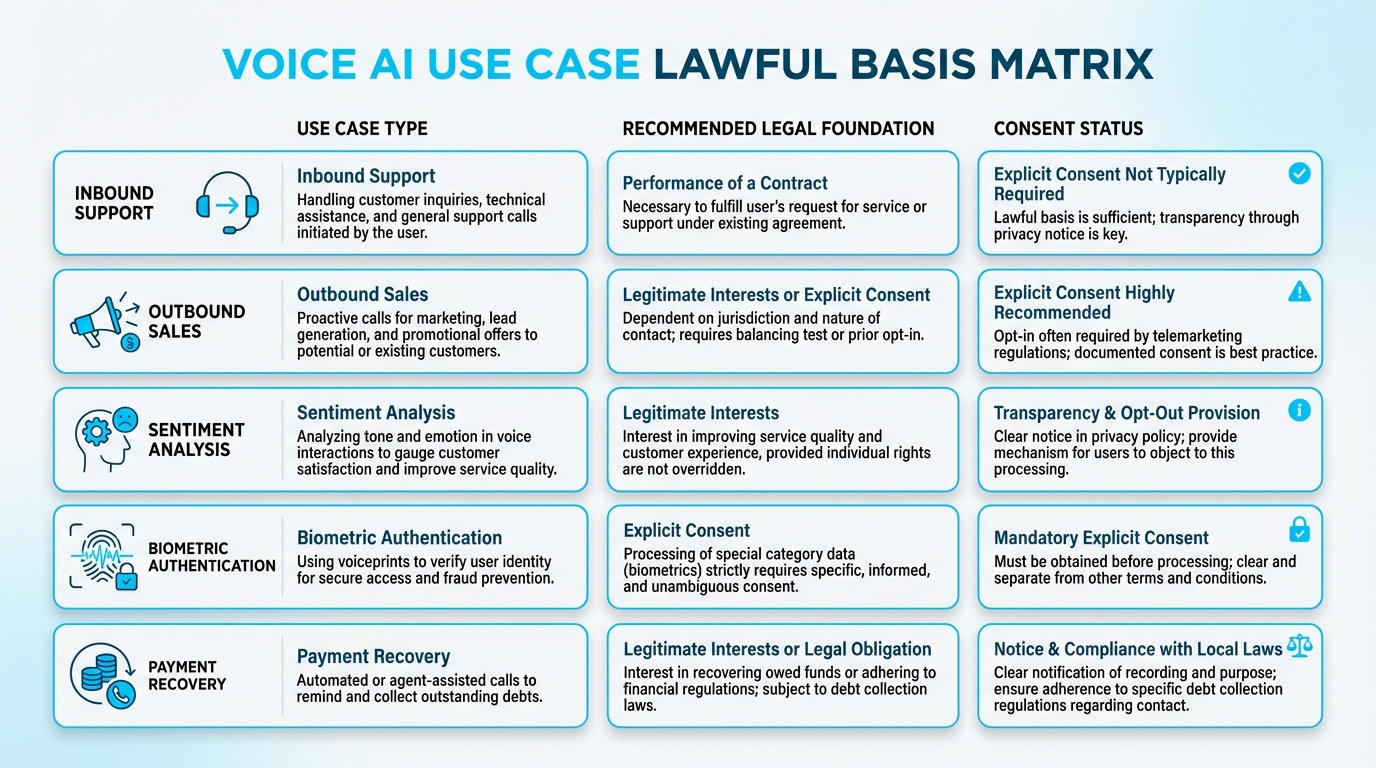

- Exclusive decision matrices mapping every voice AI use case to the correct lawful basis

- Breakthrough architecture patterns for deletion pipelines that execute in seconds

- Guaranteed clarity on the dual-layer AI Act and GDPR obligations most teams miss

Here is the uncomfortable truth about deploying AI voice agents in Europe: the technology is ready, but most companies treat GDPR compliance like a checkbox they will get to after launch. They bolt on a privacy notice, bury consent language in terms of service, and assume the vendor handles the rest. Then the Data Protection Authority sends a letter. And the letter has a number at the bottom that makes the CFO go pale.

This article is not a legal primer dressed up as a blog post. It is an operational playbook, built from deploying GDPR-compliant AI voice agents across regulated industries, for enterprises that want EU-compliant voice AI without slowing their pipeline to a crawl. Whether you are running AI-powered sales calls, support triage, retention campaigns, or feedback loops, every section here ties a specific GDPR or AI Act obligation to a concrete implementation decision you will face.

NewVoices has built its platform around this exact intersection: enterprise-grade voice AI that ships with SOC 2 Type II certification, GDPR-native architecture, and HIPAA readiness — because compliance is not a feature you add. It is the foundation you build on.

The €20 Million Misunderstanding: Why Most Voice AI Compliance Is Theater

Most enterprises believe they are GDPR-compliant because their vendor says so. That belief is expensive.

Article 5(1) of the GDPR sets out six principles that govern every byte of personal data your voice agent touches: lawfulness, fairness and transparency; purpose limitation; data minimisation; accuracy; storage limitation; and integrity and confidentiality. A seventh, accountability (Article 5(2)), means you must prove compliance, not just claim it.

Did You Know?

Voice recordings are personal data. Transcriptions are personal data. Sentiment scores derived from tone analysis are personal data. The metadata showing who called whom, when, and for how long — personal data.

A mid-market insurance company deployed a voice AI agent for claims intake across three EU markets. The agent collected policyholder details, recorded conversations for quality assurance, and stored transcripts indefinitely in a U.S.-hosted cloud instance. The DPIA? Never completed. The result: a formal investigation, a six-month remediation order, and €1.2 million in penalties before a single claim was denied.

This is not a chatbot compliance problem. It is a data architecture problem — and it starts the moment a voice agent picks up the phone.

Consent Is Not a Recording Disclaimer: Choosing Your Lawful Basis Without Guessing

"This call may be recorded for quality purposes."

That sentence has launched a thousand compliance failures. Under GDPR, playing a recording disclaimer is not consent. It is not even close. The EDPB Guidelines 05/2020 on consent require that valid consent be freely given, specific, informed, and unambiguous — with withdrawal as easy as granting it. A pre-recorded message before a voice agent interaction meets none of those criteria.

Your GDPR strategy for voice AI needs a lawful basis decision matrix, not a single policy applied to every call type.

The EDPB Guidelines 2/2019 on contractual necessity make one thing explicit: you cannot shoehorn marketing analytics or profiling into contract performance.

Quick Tip

A SaaS company using NewVoices for AI-powered sales outreach handles this by mapping each call flow to a distinct lawful basis before the agent speaks a single word — consent for new leads, contractual necessity for existing customers, legitimate interests for internal QA. Three call types, three legal foundations, zero ambiguity.
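
The three-way mapping described above can be sketched as a lookup that refuses to start a call without a documented basis. This is a minimal illustration, not NewVoices' implementation: the call-type names, `LAWFUL_BASIS_BY_CALL_TYPE`, and `resolve_lawful_basis` are hypothetical, and a real matrix would be agreed with your DPO.

```python
from enum import Enum


class LawfulBasis(Enum):
    CONSENT = "consent"                            # GDPR Art. 6(1)(a)
    CONTRACT = "contract"                          # GDPR Art. 6(1)(b)
    LEGITIMATE_INTERESTS = "legitimate_interests"  # GDPR Art. 6(1)(f)


# Hypothetical call-flow names mirroring the three call types in the example above.
LAWFUL_BASIS_BY_CALL_TYPE = {
    "new_lead_outreach": LawfulBasis.CONSENT,
    "existing_customer_service": LawfulBasis.CONTRACT,
    "internal_qa_review": LawfulBasis.LEGITIMATE_INTERESTS,
}


def resolve_lawful_basis(call_type: str, has_recorded_consent: bool = False) -> LawfulBasis:
    """Return the documented lawful basis for a call type, or refuse to proceed."""
    basis = LAWFUL_BASIS_BY_CALL_TYPE.get(call_type)
    if basis is None:
        # An unmapped call type means nobody made the decision yet: stop here.
        raise ValueError(f"No lawful basis documented for call type {call_type!r}")
    if basis is LawfulBasis.CONSENT and not has_recorded_consent:
        # Consent-based flows need consent on record before the agent dials.
        raise PermissionError(f"{call_type!r} relies on consent, but none is on record")
    return basis
```

Failing closed on an unmapped call type is the point of the sketch: a new campaign cannot go live until someone has written its lawful basis into the matrix.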

What Hospitals Teach Enterprises About Voice Data Architecture

Healthcare systems have operated under strict data-handling regimes for decades. They do not store patient records in a single flat database. They segment by sensitivity, encrypt at rest and in transit, restrict access by role, and purge on schedule. Voice AI deployments should work exactly the same way — and most do not.

Data Protection by Design and by Default, codified in Article 25 of the GDPR and elaborated in EDPB Guidelines 4/2019, requires that privacy controls be embedded into the system architecture from day one, not retrofitted after a regulator calls.

The DPIA Is Not Optional — And It Is Not a Form

Under Article 35 of the GDPR, a Data Protection Impact Assessment is mandatory when processing is likely to result in a high risk to individuals' rights and freedoms. New technologies, systematic monitoring, and large-scale processing of sensitive data are the typical indicators, and AI voice agents routinely tick all three boxes.

NewVoices approaches this through separation of concerns in its platform architecture: raw audio is encrypted with AES-256 at rest, transcriptions are pseudonymised within 30 seconds of generation, and derived analytics are stored in a separate data layer with independent retention rules.

Did You Know?

A 2025 study on voice anonymisation risks demonstrated that commonly used speaker anonymisation techniques can be reversed with 78% accuracy using readily available re-identification models.

A healthcare staffing firm processing 40,000 AI voice interactions per month deployed NewVoices with a 90-day rolling retention window for raw audio, 12-month retention for anonymised transcripts, and real-time deletion triggers for any call where a subject exercised their right to erasure. The result: zero data subject complaints in 14 months of operation and a DPIA that their DPO described as "the only one that actually matches what the system does."
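
A retention schedule like the one in this example can be expressed as a small rule table plus a check that a purge job runs against every stored artifact. The sketch below is illustrative only: the artifact-type names and `is_due_for_deletion` are hypothetical, and "12 months" is approximated as 365 days.

```python
from datetime import datetime, timedelta

# Retention windows taken from the example above (12 months approximated as 365 days).
RETENTION = {
    "raw_audio": timedelta(days=90),
    "anonymised_transcript": timedelta(days=365),
}


def is_due_for_deletion(
    artifact_type: str,
    created_at: datetime,
    now: datetime,
    erasure_requested: bool = False,
) -> bool:
    """True when the artifact outlived its window or the subject asked for erasure."""
    if erasure_requested:
        # An erasure request overrides the schedule: delete regardless of age.
        return True
    return now - created_at >= RETENTION[artifact_type]
```

An unknown artifact type raises `KeyError` on purpose, so data without a retention rule surfaces as an error instead of being kept indefinitely.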

See GDPR-Native Architecture in Action

Join 10,000+ enterprises that trust NewVoices for compliant voice AI

Limited deployment slots available for Q3 2025

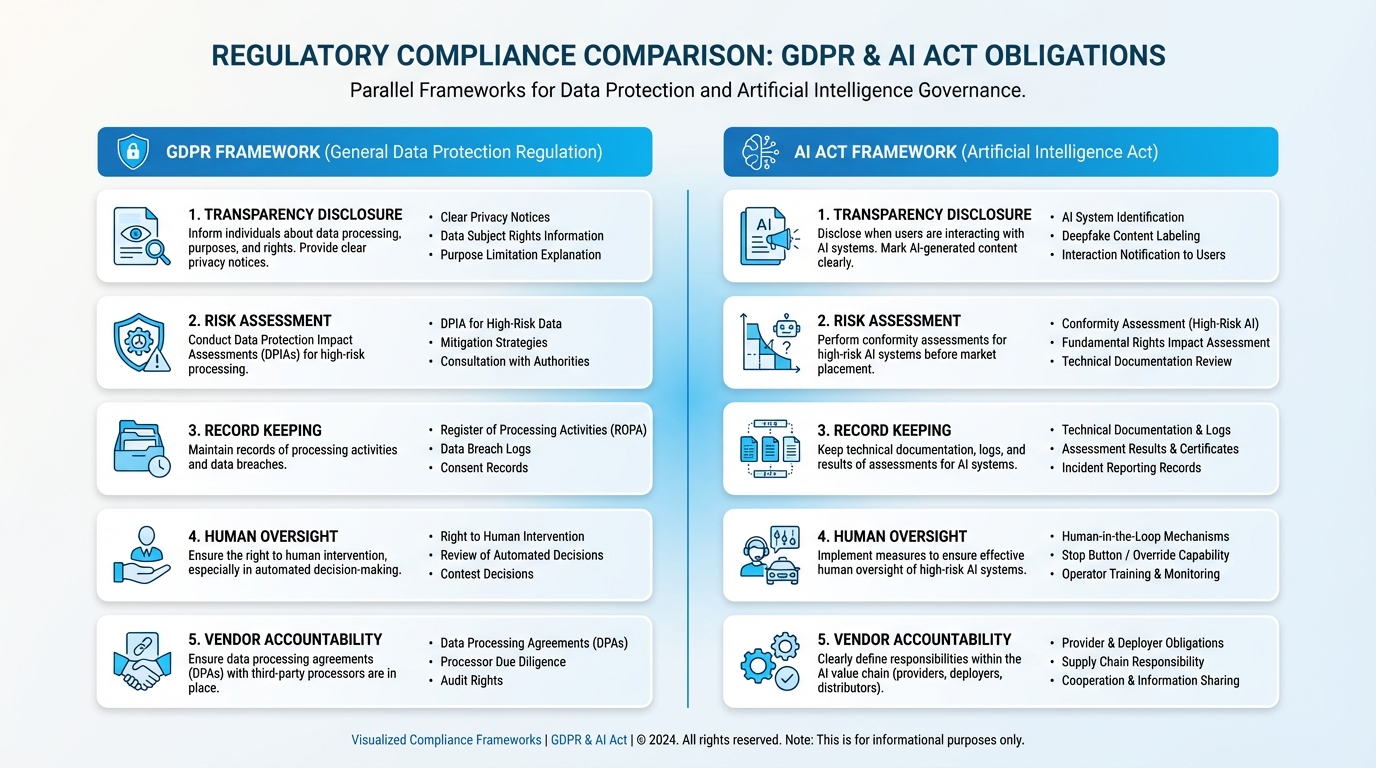

The AI Act Does Not Replace GDPR — It Adds a Second Compliance Layer Most Teams Ignore

Regulation (EU) 2024/1689 — the AI Act — entered into force on August 1, 2024. Its obligations phase in through 2027. And it changes the compliance equation for every voice AI deployment in Europe.

GDPR asks: Are you handling personal data lawfully? The AI Act asks: Is your AI system safe, transparent, and appropriately governed? You need to answer both. Passing one exam does not exempt you from the other.

For voice agents, the AI Act transparency provisions hit hardest. Article 50 of the AI Act requires that people interacting with an AI system be informed, clearly and at the latest at the time of the first interaction, that they are communicating with an AI and not a human.

Quick Tip

NewVoices resolves the disclosure tension through transparent confidence: the AI identifies itself within the first three seconds of every call, then immediately demonstrates competence so compelling that the disclosure becomes a feature rather than a friction point. Customers do not hang up when the AI introduces itself. They hang up when it wastes their time.

An enterprise deploying voice AI for customer service and operations across four EU member states needs a DPIA under GDPR and, where the system falls into a high-risk category, a conformity assessment under the AI Act. The two are typically conducted by different teams, documented in different frameworks, and updated on different schedules. The companies that build for both from day one avoid the panicked retrofit that costs six figures and six months.

Your Vendor's Compliance Claim Is the Biggest Risk in Your Stack

BEFORE NEWVOICES:

You ask your voice AI vendor, "Are you GDPR compliant?" They say yes. You sign the contract. Eighteen months later, a data subject access request reveals their sub-processor stores transcripts on servers in a jurisdiction with no adequacy decision. Your DPO scrambles. Your legal team bills overtime.

WITH NEWVOICES:

You review a Data Processing Agreement that specifies processing locations, sub-processor chains, deletion protocols, and breach notification timelines — before the first test call.

Article 28 of the GDPR is unambiguous: when you engage a processor, the relationship must be governed by a contract that details the subject matter, duration, nature, and purpose of processing, the types of personal data involved, and the categories of data subjects.

The EDPB Guidelines 07/2020 on controller and processor concepts go further — in complex AI pipelines where voice data passes through speech-to-text engines, NLP models, CRM integrations, and analytics layers, determining who is controller and who is processor at each stage is not straightforward.

Real Enterprise Result

A European e-commerce company with 2.3 million customers evaluated five voice AI vendors. Four provided a generic DPA listing cloud infrastructure as the processing location. NewVoices provided a data flow map showing exactly which sub-processors touched which data types, where each processing step occurred geographically, and what encryption standards applied at each node. The deployment went live in 11 days. The DPO signed off in three.

Due diligence for a GDPR-compliant AI voice agent vendor is not a procurement formality. It is a liability allocation exercise. If your vendor cannot tell you, in writing and with specificity, where your customers' voice data lives, who touches it, and when it is deleted, you are the one holding the risk. The controller always is.
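
One way to make "in writing, with specificity" testable is to treat the vendor's sub-processor list as structured data and flag every blank. The field names and `missing_dpa_details` below are hypothetical, a sketch of the idea and not a legal checklist:

```python
# Illustrative list of details a DPA should pin down for each sub-processor.
REQUIRED_FIELDS = (
    "name",
    "processing_location",
    "data_types",
    "encryption",
    "deletion_timeline_days",
    "breach_notice_hours",
)


def missing_dpa_details(sub_processors: list[dict]) -> dict[str, list[str]]:
    """Map each sub-processor to the required fields its entry leaves blank."""
    gaps = {}
    for index, entry in enumerate(sub_processors):
        # Treat absent keys, empty strings, and empty lists as unanswered.
        missing = [f for f in REQUIRED_FIELDS if entry.get(f) in (None, "", [])]
        if missing:
            gaps[entry.get("name") or f"entry #{index}"] = missing
    return gaps
```

An empty result does not prove the answers are good (a location of "cloud infrastructure" would still pass), but a non-empty one shows exactly which questions the vendor has not answered yet.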

The Deletion Problem No One Talks About: Why the Right to Be Forgotten Breaks Most Voice AI Systems

Deleting a row from a database is simple. Deleting every trace of a specific caller's voice from a distributed AI system (raw audio files, multiple transcript versions, derived sentiment scores, CRM log entries, model training data, backup archives, and analytics caches) is an engineering challenge that most platforms were never designed to solve.

When a data subject exercises their right to erasure under GDPR, you must act without undue delay and at the latest within one month, extendable only in limited cases. For a voice AI system processing thousands of calls daily, that clock starts ticking against a deletion pipeline that must reach every data store, every sub-processor, and every derived dataset where that individual's data might exist.

The NewVoices Solution

Research from Forgotten @ Scale proposes cryptographic deletion patterns — encrypting data with per-subject keys and destroying the key rather than hunting for every copy — as the only scalable approach. NewVoices implements exactly this pattern. When a deletion request arrives through Salesforce, HubSpot, Zendesk, or any integrated system, the platform destroys the encryption key, rendering all associated audio, transcripts, and derived data cryptographically irrecoverable within minutes.
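
The per-subject key pattern described above can be sketched in a few lines. Everything here is an assumption made for illustration: `SubjectVault` is a hypothetical name, the in-memory dictionaries stand in for a key management service and distributed storage, and the toy HMAC-based keystream stands in for a vetted cipher such as AES-256 and must not be used in production.

```python
import hashlib
import hmac
import secrets


def _keystream_xor(key: bytes, nonce: bytes, data: bytes) -> bytes:
    """Toy stream cipher (HMAC-SHA256 in counter mode); XOR makes it its own inverse."""
    out = bytearray()
    for offset in range(0, len(data), 32):
        counter = (offset // 32).to_bytes(8, "big")
        pad = hmac.new(key, nonce + counter, hashlib.sha256).digest()
        out.extend(b ^ p for b, p in zip(data[offset:offset + 32], pad))
    return bytes(out)


class SubjectVault:
    """Keeps every artifact for a data subject encrypted under that subject's own key."""

    def __init__(self) -> None:
        self._keys = {}       # subject_id -> key (a KMS or HSM in a real system)
        self._artifacts = []  # (subject_id, nonce, ciphertext); copies may live anywhere

    def store(self, subject_id: str, plaintext: bytes) -> None:
        key = self._keys.setdefault(subject_id, secrets.token_bytes(32))
        nonce = secrets.token_bytes(16)
        self._artifacts.append((subject_id, nonce, _keystream_xor(key, nonce, plaintext)))

    def read_all(self, subject_id: str) -> list[bytes]:
        key = self._keys.get(subject_id)
        if key is None:
            raise KeyError(f"No key for {subject_id!r}: data is unrecoverable")
        return [_keystream_xor(key, n, c) for s, n, c in self._artifacts if s == subject_id]

    def erase_subject(self, subject_id: str) -> None:
        """Crypto-shred: destroy the key so every ciphertext copy becomes unreadable."""
        self._keys.pop(subject_id, None)
```

Note that `erase_subject` never touches `_artifacts`. The ciphertext can sit in backups and caches indefinitely; once the only key is gone, none of it can be decrypted, which is why the pattern scales where copy-hunting does not.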

This is not a feature. It is the difference between a data subject request that takes your team four hours and one that takes four seconds.

Why "We Anonymise Everything" Is the Most Dangerous Sentence in Your Privacy Policy

Anonymisation sounds like the compliance silver bullet. Strip the identifying information, and the data is no longer personal data. GDPR no longer applies. Problem solved.

Except voice data resists anonymisation in ways that text data does not.

A 2022 study on differentially private speaker anonymisation demonstrated that even state-of-the-art voice transformation techniques — pitch shifting, formant modification, and neural voice conversion — leave enough residual speaker characteristics for re-identification in controlled conditions. The vocal tract is a biometric. Modifying surface features does not eliminate the underlying identity signal.

Critical Distinction

Your anonymised call recordings are likely pseudonymised at best — still personal data under GDPR, still subject to every principle, every right, and every obligation. Pseudonymised data requires a lawful basis, a retention policy, access controls, and deletion capability. Truly anonymised data does not. Claiming the latter while delivering the former is a compliance failure waiting for an audit.

The operational implication: treat all voice data as personal data unless you can demonstrate, with documented technical evidence, that re-identification is impossible. Not unlikely. Not difficult. Impossible.

The 72-Hour Breach Clock: What Happens When Your Voice AI Gets Compromised

Article 32 of the GDPR requires appropriate technical and organisational measures for security — including encryption, pseudonymisation, confidentiality assurance, and regular testing. For voice AI systems, the attack surface extends beyond the typical web application: audio streams in transit, stored recordings, real-time transcription APIs, CRM webhook payloads, and model inference endpoints all represent potential breach vectors.

When a breach occurs (and the question is when, not if), Article 33 gives you 72 hours from becoming aware of it to notify the supervisory authority, unless the breach is unlikely to put individuals' rights and freedoms at risk. Article 34 requires notifying affected individuals without undue delay if the breach poses a high risk to their rights and freedoms.
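
The arithmetic behind the 72-hour clock is simple, but encoding it in the incident tooling removes any debate about when the deadline falls. A minimal sketch, assuming the clock starts when the controller becomes aware of the breach; the function names are hypothetical:

```python
from datetime import datetime, timedelta, timezone

NOTIFICATION_WINDOW = timedelta(hours=72)  # GDPR Art. 33(1)


def notification_deadline(became_aware_at: datetime) -> datetime:
    """Latest time to notify the supervisory authority, from the moment of awareness."""
    if became_aware_at.tzinfo is None:
        # Naive timestamps are ambiguous across the offices handling the incident.
        raise ValueError("use a timezone-aware timestamp")
    return became_aware_at + NOTIFICATION_WINDOW


def hours_remaining(became_aware_at: datetime, now: datetime) -> float:
    """Hours left on the clock; negative once the deadline has passed."""
    return (notification_deadline(became_aware_at) - now).total_seconds() / 3600


aware = datetime(2025, 7, 1, 9, 0, tzinfo=timezone.utc)
print(notification_deadline(aware).isoformat())  # 2025-07-04T09:00:00+00:00
```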

Real Enterprise Security Architecture

A B2B telecommunications provider running 85,000 AI voice interactions per month through NewVoices structured its incident response around three architectural safeguards:

- End-to-end TLS 1.3 encryption for all audio streams in transit

- AES-256 encryption for stored recordings with keys managed in a separate HSM

- Real-time anomaly detection on API access patterns triggering automated alerts within 90 seconds

Result: 18 months of operation. Zero breaches. Zero notifications. Zero fines.

The 72-hour clock is merciless. You cannot investigate a breach, determine its scope, identify affected individuals, and draft a regulatory notification in three days if you have not mapped your data flows, tested your incident playbook, and verified your vendor's breach response capabilities in advance.

Building the Compliance Stack: A Decision Framework That Actually Ships

Compliance frameworks collect dust. Decision frameworks ship products. Here is how an enterprise deploys a GDPR-compliant AI voice agent without a 12-month legal review cycle.

The 5-Step Deployment Framework:

- Map every call type to a lawful basis before writing a single prompt. NewVoices customers using the no-code Agent Studio build compliance into the agent design phase — each call flow template includes a lawful basis selector, a retention rule, and a transparency script. No engineering dependency. No legal bottleneck.

- Run the DPIA as a design review, not a legal afterthought. The DPIA should happen during system architecture, not after deployment. For voice AI, this means assessing: what audio data is captured, where it is processed, who accesses it, how long it is stored, and what happens when someone says "delete my data."

- Sign a DPA that specifies, not summarizes. The DPA with your voice AI vendor should name sub-processors, processing locations, encryption standards, deletion timelines, and breach notification procedures with the same precision as an engineering specification.

- Implement deletion by design, not by request. The right to erasure should not trigger a manual workflow. It should trigger an automated, cryptographically verified deletion cascade that reaches every data store in minutes.

- Test your AI Act transparency script with real callers. Measure hang-up rates at the disclosure point. Optimize until the disclosure improves trust rather than destroying engagement.
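
For the last step, the hang-up rate at the disclosure point can be computed directly from call timing data. The sketch below assumes a definition the playbook does not spell out: a call counts as a disclosure hang-up if it ends within `window_s` seconds of the AI identifying itself. `CallRecord` and its fields are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class CallRecord:
    disclosure_at_s: float  # seconds into the call when the AI identified itself
    ended_at_s: float       # seconds into the call when the call ended


def disclosure_hangup_rate(calls: list[CallRecord], window_s: float = 5.0) -> float:
    """Share of calls that heard the disclosure and ended within window_s seconds of it."""
    # Calls that ended before the disclosure never heard it, so they are excluded.
    reached = [c for c in calls if c.ended_at_s >= c.disclosure_at_s]
    if not reached:
        return 0.0
    dropped = [c for c in reached if c.ended_at_s - c.disclosure_at_s <= window_s]
    return len(dropped) / len(reached)
```

Tracking this number per script variant turns "optimize the disclosure" into an ordinary A/B test: change the wording, rerun, and compare rates.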

Proven Result

NewVoices customers report that callers who hear the AI disclosure and continue the conversation convert at 12% higher rates than those transferred to hold queues — because the AI answered instantly, identified itself honestly, and solved the problem in 90 seconds.

Compliance as Competitive Moat: Why the Companies Getting This Right Are Winning Deals

A German enterprise procurement team evaluating three AI voice platforms for a 500-seat contact center replacement made one request of each vendor: "Show us your Article 30 records of processing activities for voice data."

Two vendors asked for a week to prepare documentation. NewVoices pulled it up on the call.

That deal closed in 14 days.

Not because of price. Not because of voice quality, although NewVoices deploys across 20+ languages with voice quality that enterprise callers consistently rate as indistinguishable from human agents. It closed because the procurement team's CISO and DPO signed off on the same day.

In regulated industries — financial services, healthcare, insurance, telecommunications — the vendor that removes compliance friction wins the deal. Every time.

The companies treating data privacy in AI as a cost center are losing to the companies treating it as a sales accelerator. When your voice AI platform ships with GDPR-native architecture, AI Act transparency built into every call flow, and deletion pipelines that execute in seconds, your legal team becomes a deployment accelerator instead of a deployment blocker.

Frequently Asked Questions

Is a voice AI disclaimer enough for GDPR compliance?

No. Under EDPB Guidelines 05/2020, valid consent must be freely given, specific, informed, and unambiguous. A pre-recorded disclaimer meets none of these criteria. You need a documented lawful basis for each call type.

Do I need both a DPIA and an AI Act conformity assessment?

If your system is high-risk under the AI Act, yes. GDPR Article 35 requires a DPIA for high-risk processing. The AI Act requires separate conformity assessments for high-risk AI systems. These are complementary requirements, not alternatives.

How quickly must deletion requests be processed?

Under GDPR, you must respond to erasure requests without undue delay and within one month at the latest. NewVoices implements cryptographic deletion that renders data irrecoverable within minutes, not days.

Does the AI Act require disclosing that callers are speaking to AI?

Yes. Article 50 mandates clear disclosure at the latest at the time of the first interaction. NewVoices handles this with transparent confidence: disclosure in the first three seconds, followed by immediate demonstration of value.

Limited Q3 2025 Deployment Slots

Deploy GDPR-Compliant Voice AI in Days, Not Months

Voice AI that answers every call in seconds, operates across every time zone, integrates with your existing CRM stack, and does all of it within a compliance framework that your DPO actually trusts.

Not a pitch — a live demonstration of compliant voice AI in production

Because the fine for getting this wrong is measured in millions.

And the cost of getting it right is measured in days.