Sixty-three percent of customers hang up after waiting on hold for one minute — and they never call back. That single data point has driven more revenue loss than any competitor, any pricing mistake, any bad quarter your sales team ever posted. The fix is not more headcount. It is a voice AI agent that picks up before the first ring finishes.

Updated March 2025

What You Will Walk Away With

The exact 5-layer architecture that separates revenue-generating agents from expensive answering machines

Proven compliance frameworks that protect you from per-call penalties under TCPA and FTC regulations

A 30-day deployment sequence that gets you to production while competitors are still in planning meetings

This guide is not a theoretical overview of what voice AI could do for your enterprise. It is the operational blueprint — from architecture decisions to compliance frameworks to the latency budgets that separate a convincing AI conversation from an obvious robotic one. Every section maps to a deployment milestone. Every recommendation comes from patterns observed across hundreds of enterprise rollouts.

You will walk away knowing exactly how to plan, build, deploy, and govern a voice AI agent that generates measurable revenue — not a science project that dies in staging.

The Five Layers Your Voice AI Agent Actually Runs On

Most teams treat voice AI as a single product. It is not. It is five interdependent systems, and a failure in any one of them turns your agent into an expensive answering machine.

From Sound Wave to Business Outcome

Automatic Speech Recognition (ASR) captures the caller's voice and converts it to text, but accuracy varies widely depending on accent, background noise, and microphone quality. A NIOSH evaluation found that elevated background noise degrades speech intelligibility by up to 40% in real-world environments, which means your ASR layer needs noise-cancellation preprocessing before a single word reaches the model.

Natural Language Understanding (NLU) interprets what that text actually means, distinguishing "I want to cancel" from "I want to cancel my upgrade but keep my base plan." Dialogue Management determines what the agent says next, routing the conversation through branches that map to your business logic. Natural Language Generation (NLG) crafts the response in natural language, and Text-to-Speech (TTS) delivers it as audio the caller hears.

The difference between a voice agent that books meetings and one that frustrates callers lives in the seams between these layers. NewVoices collapses all five into a single integrated stack — ASR to TTS in under 400 milliseconds — because every additional handoff between vendors adds latency, and latency kills trust.

| Component | Function | Failure Mode When Neglected | Target Performance |
|---|---|---|---|
| ASR | Speech to text | Misinterprets 1 in 5 words in noisy environments | 95%+ accuracy at 70 dB ambient noise |
| NLU | Intent classification | Routes callers to wrong department or response | 92%+ intent accuracy on first utterance |
| Dialogue Management | Conversation routing | Dead-end loops that force caller to repeat | Less than 3% loop-back rate per session |
| NLG | Response generation | Robotic, repetitive phrasing | Human indistinguishability in blind tests |
| TTS | Text to speech | Unnatural prosody that signals bot | Sub-200ms audio generation latency |
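To see how per-layer timings add up against the 400-millisecond figure mentioned above, a per-turn latency budget can be sketched as follows. The stage timings are hypothetical placeholders, not measurements, and `StageTiming` and `check_budget` are illustrative names rather than part of any platform API.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class StageTiming:
    name: str
    millis: float


def check_budget(stages: List[StageTiming], budget_ms: float = 400.0) -> Tuple[float, float, str]:
    """Return (total_ms, slack_ms, slowest_stage_name) for one conversational turn."""
    total = sum(s.millis for s in stages)
    slowest = max(stages, key=lambda s: s.millis)
    return total, budget_ms - total, slowest.name


# Hypothetical timings for one turn; replace with values from your own traces.
turn = [
    StageTiming("asr", 120.0),
    StageTiming("nlu", 40.0),
    StageTiming("dialogue", 30.0),
    StageTiming("nlg", 60.0),
    StageTiming("tts", 130.0),
]

total, slack, slowest = check_budget(turn)
print(f"total={total:.0f}ms slack={slack:.0f}ms slowest={slowest}")
```

With these made-up numbers the turn totals 380 ms, leaves 20 ms of slack, and points at TTS as the first stage to optimize.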

The $2.3 Million Question You Are Not Asking Before Deployment

Enterprises that identify high-impact use cases before deployment see 3x faster time-to-ROI

Teams rush to select vendors before answering the only question that determines ROI: which specific process is this agent replacing, and what does failure in that process cost you today?

A mid-market insurance company deployed voice AI across their entire inbound queue simultaneously. Three months later, containment rates sat at 34% — barely better than their legacy IVR system. The problem was not the technology. They never identified which call types the AI could fully resolve versus which needed human escalation. They automated everything and optimized nothing.

Success Story

An e-commerce brand identified a single use case — post-purchase delivery status inquiries representing 42% of inbound volume. They deployed a NewVoices agent exclusively for that call type, trained it on 6,000 real call transcripts, and resolved 91% of inquiries without human touch. Support costs dropped from $4.20 per call to $0.35.

Before you evaluate a single vendor, answer these five questions in writing:

- Who is calling — and what do they expect when they reach you?

- Which call types have the highest volume and the most predictable resolution paths?

- Where does your current system lose the caller — hold time, transfers, or misrouted menus?

- What does your CRM need to receive from each call to maintain data integrity?

- What regulatory constraints govern recordings, consent, and data retention in your industry?

Critical Compliance Note

The FCC's February 2024 declaratory ruling on artificial voice under the TCPA explicitly classifies AI-generated voice as artificial or prerecorded, triggering prior express consent requirements for outbound calls. Skip this analysis and your deployment becomes a liability, not an asset.

Why a 900-Millisecond Delay Costs You 23% of Your Conversions

Latency is the silent killer of voice AI deployments. Every millisecond between a caller finishing their sentence and the agent responding erodes the illusion of a natural conversation. Cross the 1.2-second threshold and callers start talking over the agent, repeating themselves, or hanging up.

The engineering challenge is real. Your ASR layer needs to process streaming audio, pass partial results to NLU for early intent detection, generate a response, and synthesize speech — all while the caller expects the same conversational cadence they would get from a human agent. AWS documentation on streaming transcription details how partial result stabilization works in practice — the ASR begins outputting candidate transcriptions before the speaker finishes, allowing downstream systems to begin processing early.
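The idea of acting on partial transcripts can be illustrated with a small sketch. It does not call any real ASR service: the list of partial strings stands in for a streaming feed, the keyword matcher stands in for a real NLU model, and `INTENT_KEYWORDS`, `classify`, and `early_intent` are hypothetical names. The intent is only accepted once it has been stable across consecutive partials, which is one simple way to avoid reacting to a transcript that is still changing.

```python
from typing import Iterable, Optional, Tuple

# Hypothetical keyword table; a production system would use a trained classifier.
INTENT_KEYWORDS = {
    "cancel_subscription": ("cancel",),
    "delivery_status": ("delivery", "tracking", "where is my order"),
}


def classify(text: str) -> Optional[str]:
    """Return the first intent whose keyword appears in the text, else None."""
    lowered = text.lower()
    for intent, phrases in INTENT_KEYWORDS.items():
        if any(phrase in lowered for phrase in phrases):
            return intent
    return None


def early_intent(partials: Iterable[str], min_stable: int = 2) -> Tuple[Optional[str], int]:
    """Return (intent, partials_consumed) once the same intent has been seen
    in min_stable consecutive partial transcripts; (None, n) if it never is."""
    last: Optional[str] = None
    streak = 0
    count = 0
    for count, text in enumerate(partials, start=1):
        intent = classify(text)
        if intent is not None and intent == last:
            streak += 1
        else:
            streak = 1 if intent is not None else 0
        last = intent
        if streak >= min_stable:
            return intent, count
    return None, count


stream = [
    "I",
    "I want to",
    "I want to check my delivery",
    "I want to check my delivery status",
    "I want to check my delivery status please",
]
print(early_intent(stream))
```

In this example the intent is committed after the fourth partial, one update before the caller finishes speaking, which is where downstream processing could start.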

Did You Know?

Research from Amazon Science demonstrates that predictive prefetching — where the system anticipates likely completions and pre-generates responses — shaves 30-40% off perceived latency in production voice assistants.

NewVoices engineers this entire pipeline into a single inference path. The result: consistent sub-400ms response times across 20+ languages, with no perceptible gap between the caller's last word and the agent's first. That is not a spec sheet number. It is the reason enterprises using the platform report that 78% of callers cannot distinguish the AI agent from a human representative in post-call surveys.

| Response Channel | Average Response Latency | Caller Drop-off Rate | Cost Per Interaction |
|---|---|---|---|
| Human agent (fully staffed) | 45–90 seconds (ring + routing) | 12% during hold | $6.50–$12.00 |
| Legacy IVR system | 15–30 seconds (menu navigation) | 28% during menu tree | $1.20–$2.50 |
| Chatbot with voice bolt-on | 1.5–3 seconds per turn | 19% after second delay | $0.80–$1.50 |
| NewVoices AI agent | Under 400ms per turn | 4% across full session | $0.25–$0.60 |

Your Data Strategy Is the Deployment — Everything Else Is Configuration

The single largest predictor of voice AI success is not which vendor you choose. It is the quality, volume, and diversity of the training data you feed the system before it takes a single live call.

What Good Data Actually Looks Like for Voice

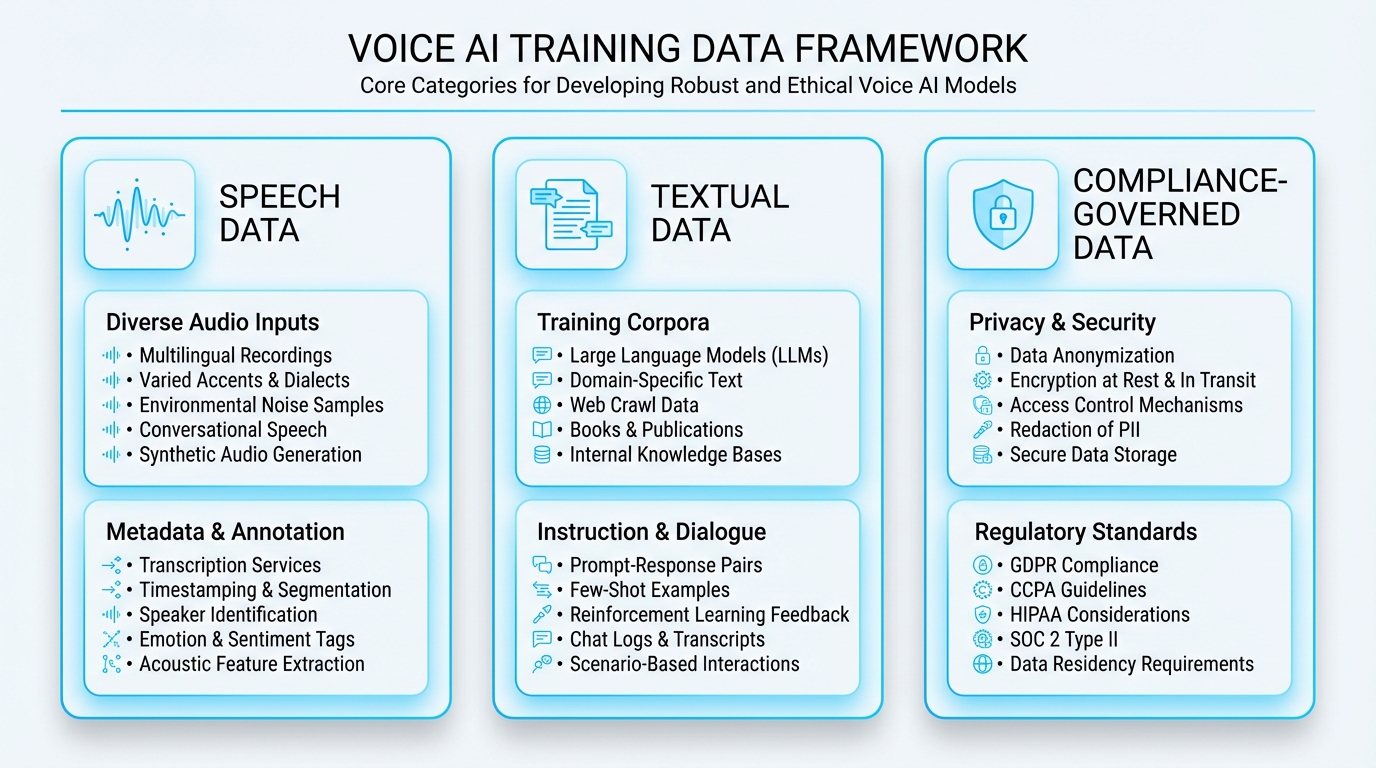

You need three data categories, and most teams only prepare one.

Speech data — real audio recordings that represent the acoustic conditions your agent will encounter. That means calls from mobile phones in cars, landlines in quiet offices, and VoIP connections with packet loss artifacts. If your training data comes exclusively from clean studio recordings, your agent will fail the moment a caller speaks from a warehouse floor.

Textual data — annotated transcripts with intent labels, entity tags, and resolution outcomes. A healthcare provider preparing for service and operations automation needs transcripts labeled not just with appointment scheduling as an intent, but with sub-intents: reschedule, cancel, new patient first visit, follow-up with specific provider. The granularity of your intent taxonomy determines the ceiling of your agent accuracy.
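A labeled transcript record with sub-intents might look like the sketch below. The field names, the `LabeledUtterance` class, and the `undercovered` helper are assumptions made for illustration; the point is that each utterance carries both a top-level intent and a finer sub-intent, so thinly covered labels can be spotted before training.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class LabeledUtterance:
    text: str
    intent: str                      # top-level intent
    sub_intent: str                  # finer-grained label under that intent
    entities: Dict[str, str] = field(default_factory=dict)
    resolved: bool = False           # whether the original call resolved the request


def undercovered(utterances: List[LabeledUtterance], min_examples: int) -> Dict[Tuple[str, str], int]:
    """Return the (intent, sub_intent) pairs with fewer than min_examples examples."""
    counts = Counter((u.intent, u.sub_intent) for u in utterances)
    return {label: n for label, n in counts.items() if n < min_examples}


# Tiny hypothetical corpus; a real one would hold thousands of transcripts.
corpus = [
    LabeledUtterance("I need to move my appointment to Friday",
                     "appointment_scheduling", "reschedule", {"day": "Friday"}, True),
    LabeledUtterance("Can I push my visit back a week?",
                     "appointment_scheduling", "reschedule", {}, True),
    LabeledUtterance("Please cancel my appointment",
                     "appointment_scheduling", "cancel", {}, True),
]

print(undercovered(corpus, min_examples=2))
```

Here the `cancel` sub-intent is flagged because it has a single example, which is the kind of gap that caps accuracy later.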

Quick Tip

Compliance-governed data is where regulated industries stumble. HHS guidance on HIPAA and audio telehealth makes clear that audio recordings and transcripts generated by AI agents carry Security Rule obligations. You cannot simply store call recordings in an unencrypted S3 bucket and call it compliant.

NewVoices handles this at the infrastructure level — SOC 2 Type II certified, HIPAA-compliant data handling, GDPR-ready with data residency controls — so your compliance team is not scrambling to retrofit security after launch.

Building Dialogue That Sells Instead of Just Answering

Goal-oriented dialogue design transforms AI agents from ticket deflectors into revenue generators

Here is the mistake that separates AI agents that generate revenue from AI agents that deflect tickets: most teams design dialogue flows as decision trees. They map every possible branch, script every possible response, and produce an agent that sounds like an automated phone menu wearing a better voice.

The shift is from scripted to goal-oriented dialogue. Instead of "If caller says X, respond with Y," the framework becomes: "The agent's objective in this conversation is to confirm the caller's identity, understand their billing dispute, and either resolve it in-session or schedule a callback with a specialist. In every scenario, confirm their next payment date before ending the call."
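One way to picture the goal-oriented framing is as an ordered list of objectives checked against conversation state, rather than a tree of scripted branches. The sketch below is a simplified illustration under that assumption; `BillingCallState`, `GOALS`, and `next_goal` are hypothetical names, and a real dialogue manager would also handle interruptions and goal reordering.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class BillingCallState:
    identity_confirmed: bool = False
    dispute_summary: Optional[str] = None
    outcome: Optional[str] = None          # "resolved" or "callback_scheduled"
    payment_date_confirmed: bool = False


Goal = Tuple[str, Callable[[BillingCallState], bool]]

# Each goal pairs a name with a predicate that says whether it is already met.
GOALS: List[Goal] = [
    ("confirm_identity", lambda s: s.identity_confirmed),
    ("understand_dispute", lambda s: s.dispute_summary is not None),
    ("resolve_or_schedule_callback", lambda s: s.outcome in ("resolved", "callback_scheduled")),
    ("confirm_next_payment_date", lambda s: s.payment_date_confirmed),
]


def next_goal(state: BillingCallState) -> Optional[str]:
    """Return the first unmet goal, or None when the call may end."""
    for name, is_met in GOALS:
        if not is_met(state):
            return name
    return None


state = BillingCallState()
print(next_goal(state))            # first unmet goal on a fresh call
state.identity_confirmed = True
state.dispute_summary = "charged twice in March"
print(next_goal(state))            # moves on once earlier goals are satisfied
```

The agent is free to phrase each turn however the conversation requires; the goal list only decides what still has to be achieved before the call ends.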

Proven Results

A B2B SaaS company deployed this approach through the NewVoices sales and growth platform for inbound demo requests. Their previous process: a web form triggered an email to an SDR, who called back in 4–6 hours. With voice AI, every form submission triggered an immediate call within three seconds. Demo-to-meeting conversion jumped from 12% to 37% in the first 60 days.

This is not a chatbot with a script. It is a revenue engine that never clocks out.

Entity recognition drives the practical value. Your agent needs to extract dates, dollar amounts, product names, account numbers, and sentiment signals from natural speech, not from callers pressing buttons. Training entity extraction requires hundreds of annotated examples per entity type, with variations in how callers express the same information. "Last Tuesday," "the 14th," and "two days ago" can all refer to the same date, and your NLU layer needs to resolve all three to the correct calendar value.
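The date example can be made concrete with a small rule-based resolver. This is a minimal sketch, not a production entity extractor: it covers only the three phrasings mentioned, assumes "last Tuesday" means the most recent Tuesday strictly before today, and assumes "the 14th" refers to the most recent 14th on or before today. The function name `resolve_past_date` is hypothetical.

```python
import re
from datetime import date, timedelta
from typing import Optional

WEEKDAYS = ["monday", "tuesday", "wednesday", "thursday", "friday", "saturday", "sunday"]
NUMBER_WORDS = {"one": 1, "two": 2, "three": 3, "four": 4, "five": 5, "six": 6, "seven": 7}


def resolve_past_date(phrase: str, today: date) -> Optional[date]:
    """Resolve a few spoken past-date expressions relative to `today`."""
    text = phrase.lower().strip()

    # "last tuesday" -> most recent such weekday strictly before today
    m = re.fullmatch(r"last (\w+)", text)
    if m and m.group(1) in WEEKDAYS:
        delta = (today.weekday() - WEEKDAYS.index(m.group(1))) % 7
        return today - timedelta(days=delta or 7)

    # "two days ago" / "2 days ago"
    m = re.fullmatch(r"(\w+) days? ago", text)
    if m:
        token = m.group(1)
        n = int(token) if token.isdigit() else NUMBER_WORDS.get(token)
        return today - timedelta(days=n) if n is not None else None

    # "the 14th" -> that day of this month, or of last month if not reached yet
    m = re.fullmatch(r"the (\d{1,2})(?:st|nd|rd|th)", text)
    if m:
        day = int(m.group(1))
        year, month = today.year, today.month
        if day > today.day:
            month -= 1
            if month == 0:
                year, month = year - 1, 12
        try:
            return date(year, month, day)
        except ValueError:          # e.g. "the 31st" when last month had 30 days
            return None

    return None


today = date(2025, 1, 16)  # a Thursday
for phrase in ("last Tuesday", "the 14th", "two days ago"):
    print(phrase, "->", resolve_past_date(phrase, today))
```

On a call taking place on Thursday, 16 January 2025, all three phrases resolve to 14 January 2025. A trained NLU model replaces the regular expressions in practice, but the normalization target, a single calendar value, is the same.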

Quick Tip

Error handling separates professional deployments from prototypes. When the agent does not understand, it should not say "I did not catch that." It should offer a contextual redirect: "I want to make sure I get your account number right. Could you repeat just those digits for me?" Enterprises using contextual error recovery report 34% fewer caller escalations to human agents.
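A contextual redirect depends on knowing which piece of information the agent was waiting for when understanding failed. The sketch below assumes the dialogue manager tracks a pending slot and a failure count; the slot names, prompt wording beyond the account-number example, and the two-attempt limit are all illustrative choices.

```python
from typing import Optional, Tuple

# Reprompts keyed by the slot the agent was trying to fill (hypothetical slots).
REPROMPTS = {
    "account_number": ("I want to make sure I get your account number right. "
                       "Could you repeat just those digits for me?"),
    "payment_date": "I want to be sure I have the right date. Which day was the payment made?",
}
GENERIC_REPROMPT = "Could you tell me a little more about what you need help with?"
HANDOFF = "Let me connect you with a specialist who can help with that."


def recover(pending_slot: Optional[str], failed_attempts: int, max_attempts: int = 2) -> Tuple[str, str]:
    """Return (action, utterance): reprompt in context, or escalate after repeated failures."""
    if failed_attempts >= max_attempts:
        return "escalate", HANDOFF
    return "reprompt", REPROMPTS.get(pending_slot, GENERIC_REPROMPT)


print(recover("account_number", failed_attempts=1))
print(recover("account_number", failed_attempts=2))
```

Capping the number of reprompts keeps the caller out of a repeat loop and hands off to a human once the contextual retry has not worked.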

The Factory Floor Principle: Why Voice AI Deployment Mirrors Manufacturing

Software teams think of deployment as pushing code to production. Voice AI deployment is closer to commissioning a factory floor — you are orchestrating physical infrastructure (telephony), real-time processing pipelines, third-party integrations, and compliance controls that must all function simultaneously under variable load.

The parallel is instructive. A factory does not go from blueprint to full production in one step. It runs a pilot line first — limited volume, intensive quality inspection, rapid iteration on defects. Your voice AI deployment follows the same sequence:

- Shadow mode — agent listens but does not respond, and you compare its proposed responses to human agent actions

- Limited live mode — agent handles a single call type with immediate human fallback

- Full production — agent operates autonomously with exception-based human escalation

Secure deployment practices matter at every stage. The NIST Secure Software Development Framework (SSDF, SP 800-218) provides the supply chain and verification controls that apply directly to AI agent delivery, from validating the integrity of model artifacts to ensuring that API keys, telephony credentials, and CRM tokens are managed through secrets infrastructure, not hardcoded in configuration files.
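As a minimal illustration of keeping credentials out of configuration files, the sketch below reads them from the process environment, which a secrets manager would typically populate at deploy time, and fails fast when one is missing. The variable names `TELEPHONY_API_KEY` and `CRM_TOKEN` are hypothetical.

```python
import os
from typing import Dict, Mapping, Optional

# Hypothetical secret names; use whatever your secrets infrastructure injects.
REQUIRED_SECRETS = ("TELEPHONY_API_KEY", "CRM_TOKEN")


def load_secrets(env: Optional[Mapping[str, str]] = None) -> Dict[str, str]:
    """Read required secrets from the environment and fail fast if any are absent."""
    source = os.environ if env is None else env
    missing = [name for name in REQUIRED_SECRETS if not source.get(name)]
    if missing:
        # Report names only; never log secret values.
        raise RuntimeError("missing required secrets: " + ", ".join(missing))
    return {name: source[name] for name in REQUIRED_SECRETS}


# Example with an explicit mapping so the sketch runs without real credentials.
secrets = load_secrets({"TELEPHONY_API_KEY": "example-key", "CRM_TOKEN": "example-token"})
print(sorted(secrets))
```

Passing the mapping explicitly also makes the loader easy to test without touching the real environment.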

For secure access patterns and identity verification in voice workflows, NewVoices provides enterprise-grade platform controls including role-based access, encrypted credential storage, and audit-logged configuration changes. The No-code Agent Studio lets business teams design and update dialogue flows without opening a support ticket with engineering, but every change is versioned, reviewed, and logged.

The Compliance Trap That Catches 70% of Voice AI Deployments

Here is what nobody tells you during the vendor evaluation: the technology works. The compliance framework around it is where deployments die.

If your voice AI agent makes outbound calls (for sales, collections, appointment reminders, or payment recovery), you are operating under the Telemarketing Sales Rule (TSR) and the Telephone Consumer Protection Act (TCPA). The FTC's March 2024 update explicitly confirmed that TSR prohibitions apply to AI-generated voice, including voice cloning technology.

Before NewVoices

Legal team reviews scripts manually, compliance gaps surface post-deployment, and a single violation triggers per-call penalties that dwarf your entire AI budget.

With NewVoices

Consent verification, disclosure delivery, opt-out handling, and call recording governance are built into every agent template. The platform enforces compliance at the infrastructure layer so individual dialogue designers cannot accidentally deploy a non-compliant flow.
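A pre-dial consent gate of the kind described above can be sketched in a few lines. This is a deliberately simplified illustration, not a statement of what the TCPA or TSR require in full: it checks only for a consent record and an opt-out flag, and the `ConsentRecord` fields and `may_place_ai_call` function are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class ConsentRecord:
    phone: str
    prior_express_consent: bool
    opted_out: bool = False


def may_place_ai_call(record: Optional[ConsentRecord]) -> Tuple[bool, str]:
    """Return (allowed, reason) for an outbound AI-voice call to this recipient."""
    if record is None:
        return False, "no consent record on file"
    if record.opted_out:
        return False, "recipient has opted out"
    if not record.prior_express_consent:
        return False, "prior express consent not captured"
    return True, "ok"


print(may_place_ai_call(ConsentRecord("+15550100", prior_express_consent=True)))
print(may_place_ai_call(None))
```

Returning a reason alongside the decision gives the audit log something concrete to record for every blocked call.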

Healthcare deployments add another dimension. The HIPAA Security Rule applies to any voice AI system that processes, stores, or transmits protected health information, which includes call recordings, transcripts, and even the metadata about when a patient called and what they discussed. NewVoices maintains HIPAA compliance across its entire voice pipeline, from encrypted telephony to redacted transcript storage with configurable retention policies.

Post-Launch: Where 80% of Your ROI Actually Gets Created

Deployment day is not the finish line. It is the starting line. The voice AI agents that deliver 300%+ ROI are the ones that get optimized weekly for the first 90 days and monthly thereafter.

The Monitoring Stack That Pays for Itself

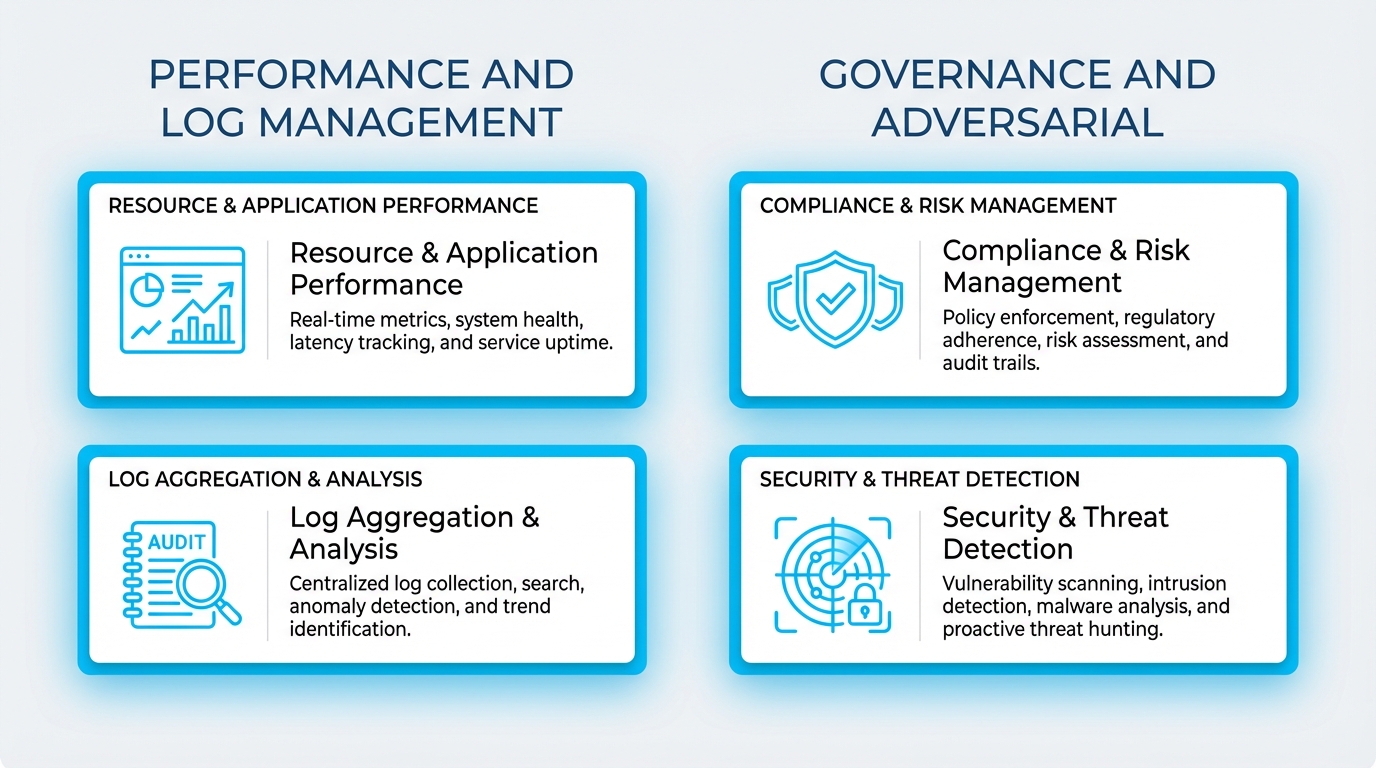

You need three core monitoring layers running simultaneously, plus the adversarial scanning that appears as the fourth row of the table below:

Performance monitoring tracks latency, ASR accuracy, intent classification confidence, and task completion rates in real time. A 2% drop in ASR accuracy on Tuesday afternoon might mean a new call routing change is sending you calls from a noisier environment — and without real-time visibility, you will not catch it until caller satisfaction scores crater two weeks later.

Log management creates the audit trail that makes troubleshooting possible and compliance provable. NIST SP 800-92 provides the framework for designing log management programs that support monitoring, incident response, and auditability — all three of which apply directly to voice AI operations.

Governance monitoring ensures your agent stays within policy boundaries as it handles new conversation patterns. The NIST AI Risk Management Framework provides a lifecycle governance model that maps directly to voice AI operations.

Real-World Impact

A regional healthcare network using NewVoices detected a 6% intent misclassification spike within four hours of a new phone system migration at one of their clinics, corrected the acoustic preprocessing settings, and restored 97% accuracy the same day. Without automated monitoring, that issue would have run undetected for weeks — generating hundreds of misrouted patient calls.

| Monitoring Layer | Key Metrics | Alert Threshold | Review Cadence |
|---|---|---|---|
| Performance | ASR accuracy, response latency, task completion rate | Greater than 2% deviation from 7-day rolling average | Real-time dashboards, daily summary |
| Log Management | Intent confidence distribution, escalation rate, error frequency | Escalation rate exceeds 15% for any single intent | Weekly deep-dive, on-demand for incidents |
| Governance | Compliance disclosure delivery rate, consent capture, data retention | Any disclosure miss triggers immediate alert | Monthly audit, quarterly compliance review |
| Adversarial | Anomalous input patterns, prompt injection attempts, social engineering flags | Any detected injection attempt triggers review | Continuous automated scanning, monthly red-team |
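The performance-layer threshold in the table can be expressed as a short check. The sketch assumes the 2% figure is a relative deviation from the mean of the previous seven daily values, which is one reasonable reading of the table; `deviates_from_baseline` is a hypothetical helper name and the accuracy numbers are invented.

```python
from statistics import mean
from typing import Sequence


def deviates_from_baseline(history: Sequence[float], current: float,
                           window: int = 7, threshold: float = 0.02) -> bool:
    """True when `current` differs from the rolling mean of the last `window`
    values by more than `threshold` (relative deviation)."""
    if len(history) < window:
        return False                      # not enough data for a baseline yet
    baseline = mean(history[-window:])
    if baseline == 0:
        return current != 0
    return abs(current - baseline) / baseline > threshold


# Hypothetical daily ASR accuracy for the past week.
daily_asr_accuracy = [0.95, 0.96, 0.95, 0.94, 0.95, 0.96, 0.95]

print(deviates_from_baseline(daily_asr_accuracy, 0.92))  # drop of roughly 3%
print(deviates_from_baseline(daily_asr_accuracy, 0.95))  # within normal range
```

The same function works for latency or task completion rate, since it only compares a new value with its own recent history.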

Why "Start Small" Is the Most Expensive Advice in Voice AI

Every consultant says it. Every implementation guide repeats it. "Start with a pilot. Test one use case. Scale gradually."

It sounds reasonable. It is also the reason most enterprise voice AI projects take 14 months to reach meaningful ROI.

The problem is not ambition — it is architecture. When you start small with a single-use-case proof of concept, you typically build on a narrow integration, a limited data set, and a simplified compliance posture. Then when you try to expand, you discover that the architecture cannot support multi-use-case routing, the data pipeline does not accommodate new intent categories without retraining from scratch, and the compliance framework was scoped so narrowly that every new use case requires a full legal review.

The Smarter Approach

Start focused but build wide. Deploy your first use case on an architecture designed for ten use cases. Connect your CRM integration — Salesforce, HubSpot, Zendesk, whatever your stack runs — on day one, even if you are only using two fields from it initially. Implement your compliance framework for your most regulated use case first, so every subsequent deployment inherits those controls automatically.

Enterprise Case Study

A financial services firm launched with inbound payment inquiry calls — a single use case representing 38% of call volume. But they deployed on the full NewVoices platform with complete CRM integration, SOC 2-compliant logging, and multi-language support active from day one. When they expanded to outbound payment recovery calls 45 days later, deployment took 72 hours instead of 8 weeks. Within six months: 7 distinct voice AI use cases, 2.1 million calls per month, 94% containment rate, $3.2 million annual cost reduction.

Your Next 30 Days — The Deployment Sequence That Actually Works

Stop planning. Start sequencing.

Days 1–5

Identify your highest-volume, most-predictable call type. Pull 90 days of call recordings and CRM data associated with that call type. Quantify the current cost per interaction, average handle time, and resolution rate. This is your baseline — every future metric gets measured against it.
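The three baseline figures can be computed directly from exported call records. The sketch below assumes each record carries a handle time, a fully loaded cost, and a resolved flag; the `CallRecord` fields and the sample values are hypothetical and would come from your own CRM or telephony export.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class CallRecord:
    handle_seconds: float
    cost_usd: float
    resolved: bool


def baseline(calls: List[CallRecord]) -> Dict[str, float]:
    """Compute cost per interaction, average handle time, and resolution rate."""
    if not calls:
        raise ValueError("need at least one call to compute a baseline")
    n = len(calls)
    return {
        "cost_per_interaction": sum(c.cost_usd for c in calls) / n,
        "avg_handle_seconds": sum(c.handle_seconds for c in calls) / n,
        "resolution_rate": sum(1 for c in calls if c.resolved) / n,
    }


# Two invented records; a real baseline would use the full 90-day export.
sample = [
    CallRecord(handle_seconds=300.0, cost_usd=4.0, resolved=True),
    CallRecord(handle_seconds=500.0, cost_usd=6.0, resolved=False),
]
print(baseline(sample))
```

Storing the result alongside the date range it covers makes later comparisons against the AI agent's numbers straightforward.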

Days 6–15

Design your dialogue flow against the goal-oriented framework — not a decision tree, not a script. Define the agent objective for every conversation type, the entities it needs to extract, the CRM fields it needs to read and write, and the escalation criteria that trigger a human handoff.

Days 16–22

Run shadow mode. Your AI agent processes every call in parallel with your human agents, generating proposed responses without the caller hearing them. Compare AI resolution accuracy against human agent outcomes. Target 90%+ agreement before going live.
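The 90% agreement target can be checked with a simple comparison of paired outcomes. This sketch assumes each shadowed call yields one label for what the AI proposed and one for what the human agent actually did, and that exact label match counts as agreement; the function names and outcome labels are illustrative.

```python
from typing import List, Tuple


def agreement_rate(pairs: List[Tuple[str, str]]) -> float:
    """Fraction of shadowed calls where the AI's proposed outcome matched the human's."""
    if not pairs:
        raise ValueError("no shadowed calls to compare")
    matches = sum(1 for ai_outcome, human_outcome in pairs if ai_outcome == human_outcome)
    return matches / len(pairs)


def ready_for_live(pairs: List[Tuple[str, str]], target: float = 0.90) -> bool:
    """True when agreement meets or exceeds the go-live target."""
    return agreement_rate(pairs) >= target


# Ten invented shadow-mode comparisons: nine agree, one does not.
shadow = [("refund_issued", "refund_issued")] * 9 + [("refund_issued", "escalated")]
print(agreement_rate(shadow), ready_for_live(shadow))
```

The disagreements are usually more informative than the rate itself, so it is worth reviewing each mismatched pair before raising traffic.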

Days 23–28

Limited live deployment. Route 20% of target call volume to the AI agent with real-time human monitoring. Track containment rate, caller satisfaction, and any failure modes that did not appear in shadow testing.
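Routing a fixed share of calls to the agent can be done deterministically by hashing a call identifier into a bucket. The sketch below is one common approach, not a description of how any particular platform splits traffic; `route_to_ai` and the `call-N` identifiers are hypothetical.

```python
import hashlib


def route_to_ai(call_id: str, percent: int = 20) -> bool:
    """Deterministically send roughly `percent`% of calls to the AI agent.

    Hashing the call ID keeps the decision stable if the same call is
    evaluated more than once, and avoids any shared random state.
    """
    digest = hashlib.sha256(call_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    return bucket < percent


calls = [f"call-{i}" for i in range(10_000)]
share = sum(route_to_ai(c) for c in calls) / len(calls)
print(f"share routed to AI: {share:.3f}")
```

Raising `percent` in steps gives a controlled ramp from limited live mode to full deployment without changing the routing logic.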

Days 29–30

Full deployment for the target call type. Activate all monitoring layers. Set your 30-day optimization cadence. By day 30, your competitors' leads are still waiting in a call queue. Yours got a personal, human-sounding call within three seconds: at midnight, on a holiday, in any of 20+ languages.

Q2 implementation slots are filling fast. Teams that start this month lock in current pricing before platform updates.

Your Customers Are Calling Right Now

The only question is who answers.

The voice AI market does not reward the companies that plan the longest. It rewards the ones that deploy the smartest — and then optimize relentlessly.
