A Phoenix brokerage deployed an AI tool to auto-generate listing descriptions. Within 60 days, three listings violated HUD Fair Housing guidelines. The result: a formal complaint, a five-figure legal bill, and irreparable reputational damage.

That story is not rare. It is becoming the norm. This is your compliance-first playbook to adopt AI without becoming the next cautionary tale.

Based on NAR 2025 Technology Survey

NIST AI RMF Aligned

What You Will Gain From This Playbook:

Proven compliance framework that protects your brokerage from regulatory action

Exclusive insights on NIST, HUD, and FHFA requirements most brokerages overlook

Breakthrough AI deployment strategy delivering 230% higher conversion rates

30-day governance blueprint you can implement immediately

Real estate professionals are adopting AI faster than any compliance framework can keep up with. According to NAR’s 2025 Technology Survey, the majority of Realtors now use or plan to use AI-powered tools for client service, lead management, and marketing. The enthusiasm is justified — AI delivers speed, scale, and precision that manual workflows simply cannot match.

But enthusiasm without governance is a lawsuit waiting to happen. This article is not another cheerful overview of AI potential. It is a compliance-first operating manual — built on federal frameworks, regulatory rulings, and real-world deployment data — that shows you exactly how to adopt real estate AI without exposing your brokerage to the risks already sinking your competitors.

The $4.2 Billion Opportunity Most Brokerages Are Fumbling

The real estate industry’s relationship with AI resembles a teenager with a sports car. Enormous power. Minimal training. Predictable outcomes.

NAR’s AI overview identifies three dominant use cases gaining traction: generative AI for content creation, predictive analytics for pricing and market analysis, and automation for lead management and client communication. Each one carries measurable upside — and each one carries a distinct compliance risk that most adoption guides conveniently ignore.

Proven Results: What Compliant AI Deployment Delivers

Did You Know?

Brokerages running AI voice agents report that leads answered within seconds convert at rates their manual teams never approached — because the conversation happens when buyer intent is highest, not when an agent checks their phone between showings.

But speed without compliance guardrails is a different kind of risk. And that risk has a federal price tag.

Join 10,000+ Real Estate Professionals Using Compliant AI

Hear what a compliant, enterprise-grade AI voice agent sounds like — request a live demo.

Get Your Live Demo — Receive a Call in Seconds

Limited availability this month — secure your spot now

Why the NIST Framework Matters More Than Your AI Vendor Sales Deck

Your AI vendor will tell you their product is enterprise-ready. Ask them if they have mapped their architecture to the NIST AI Risk Management Framework (AI RMF 1.0), and watch the confidence evaporate.

NIST’s framework is the closest thing the U.S. has to a universal standard for AI governance. It is organized around four core functions that translate into practical questions guiding every AI purchasing and deployment decision.

Brokerages with documented NIST alignment hold a defensible position when compliance questions arise.

The Four NIST Functions Every Brokerage Must Address

GOVERN

Who in your organization is accountable when the AI produces a discriminatory listing description? If the answer is nobody specific, you have a governance gap that regulators will exploit.

MAP

Have you identified every context in which your AI touches consumer data, makes a recommendation, or generates client-facing content? Most brokerages discover they have three times as many AI touchpoints as they realized.

MEASURE

How are you testing AI outputs for accuracy, bias, and compliance on an ongoing basis, not just at launch? The NIST AI RMF Playbook, available through the NIST Trustworthy and Responsible AI Resource Center, offers suggested actions for this kind of continuous monitoring.

MANAGE

When something goes wrong — and it will — what is your incident response? Who pulls the plug, who documents the failure, and who communicates with affected clients?
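The four questions above reduce to one test: does every AI tool have a named owner for each function? Here is a minimal sketch of that check. The four function names come from NIST AI RMF 1.0. The record layout, the example tool, and the role titles are assumptions made for illustration, not anything NIST prescribes.

```python
from dataclasses import dataclass

# Function names are from NIST AI RMF 1.0. Everything else in this sketch
# (field names, the example tool, the role titles) is hypothetical.
NIST_FUNCTIONS = ("GOVERN", "MAP", "MEASURE", "MANAGE")


@dataclass
class GovernanceRecord:
    tool: str
    owners: dict  # maps a NIST function name to the accountable person


def governance_gaps(record):
    """Return the NIST functions that have no named owner for this tool."""
    return [f for f in NIST_FUNCTIONS if not record.owners.get(f, "").strip()]


record = GovernanceRecord(
    tool="listing-description generator",
    owners={"GOVERN": "Managing broker", "MEASURE": "Compliance lead"},
)
print(governance_gaps(record))
```

Any function the check returns is a gap with nobody specific attached to it, which is exactly the answer regulators look for.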

Quick Tip: Your Legal Shield

This is not a bureaucratic exercise — it is your legal shield. Brokerages that can demonstrate NIST-aligned AI governance hold a defensible position when complaints arise. Those that cannot are operating on borrowed time.

The Fair Housing Minefield That AI Walks Into Blindly

An AI model trained on historical real estate data will absorb every bias embedded in that data. Redlining patterns. Demographic steering. Exclusionary language preferences. The model does not know these patterns are illegal. It just knows they correlate with outcomes in its training set.

This is why HUD’s fair housing framework matters more in the AI era than it ever has. The Fair Housing Act prohibits discrimination based on race, color, national origin, religion, sex, familial status, and disability — and that prohibition extends to every piece of content an AI generates on your behalf.

Every AI-generated output must be reviewed against HUD advertising guidance before publication.

Three Critical Risk Vectors You Must Address

AI-generated listing descriptions routinely produce phrases that carry discriminatory implications. “Family-friendly neighborhood” can imply a familial status preference. “Close to” a specific religious institution can signal religious steering. “Up-and-coming area” has been flagged as a proxy for racial transition language. HUD’s advertising guidance identifies dozens of phrases that trigger fair housing scrutiny, and most AI writing tools have never been trained against this specific list.

Targeted digital advertising using AI audience selection is where the largest enforcement actions are concentrating. HUD’s guidance on digital platform advertising makes clear that algorithmic targeting carries the same liability as manual discrimination — the fact that a machine chose the audience does not absolve the advertiser.

Voice and communication AI presents a subtler challenge. When an AI agent handles an inbound inquiry about a property, its response patterns — which questions it asks, which properties it recommends, how it qualifies the caller — must be reviewed for steering risks. A platform built for regulated industries builds these compliance checks into the conversation architecture itself, not as an afterthought.
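For the first risk vector, listing descriptions, a pre-publication screen can be as simple as a pattern match that routes flagged drafts to a human. The sketch below is a rough illustration. Its watch list contains only the three example phrases named in this article and is not HUD’s list. The patterns and the `flag_listing` helper are hypothetical, and a real list should come from your counsel and HUD’s advertising guidance.

```python
import re

# Hypothetical watch list built from the three examples in this article.
# It is NOT HUD's list; treat it as a placeholder for a vetted one.
WATCH_PATTERNS = [
    (r"\bfamily[- ]friendly\b", "may imply a familial status preference"),
    (r"\b(?:close to|near)\s+(?:a |the )?(?:church|mosque|synagogue|temple)\b",
     "may signal religious steering"),
    (r"\bup[- ]and[- ]coming\b", "flagged as a proxy for racial transition language"),
]


def flag_listing(text):
    """Return (matched phrase, reason) pairs that need human review."""
    findings = []
    for pattern, reason in WATCH_PATTERNS:
        for match in re.finditer(pattern, text, flags=re.IGNORECASE):
            findings.append((match.group(0), reason))
    return findings


draft = "Family-friendly home in an up-and-coming area, close to the church."
for phrase, reason in flag_listing(draft):
    print(f"REVIEW: '{phrase}' {reason}")
```

A clean result does not clear a listing. The screen only catches phrases already on the list, so the human review still happens.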

Did You Know?

The enforcement trend is unmistakable: regulators are shifting focus from individual agents to the systems and platforms those agents rely on. If your AI tool produces a discriminatory output and you deployed it without adequate review processes, the liability chain runs straight to your brokerage.

Automated Valuations Are Not Your Appraiser — And Regulators Are Drawing the Line

Property AI that estimates values is seductive. Pull up an address, get a number, skip the appraisal. Except the federal government has decided that these tools need the same rigor as the professionals they claim to supplement.

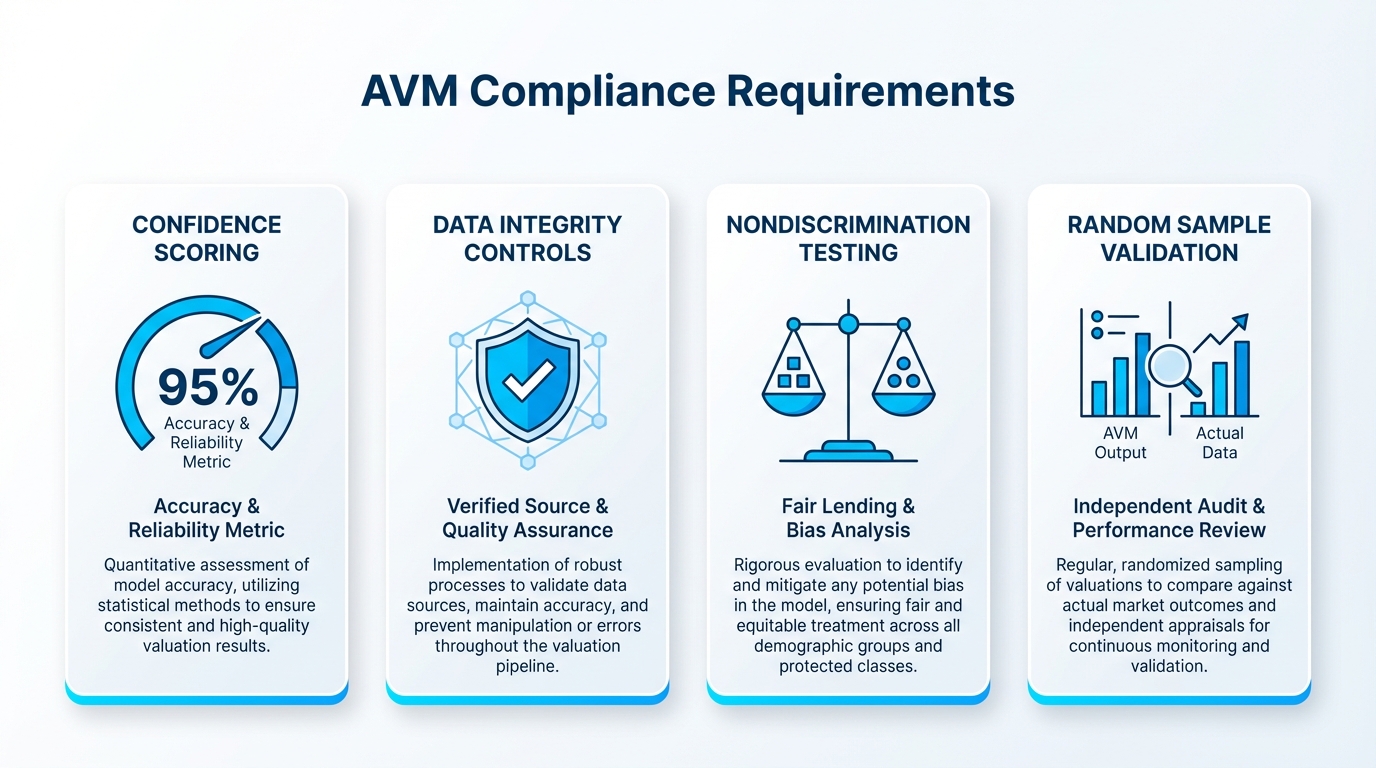

The Quality Control Standards for Automated Valuation Models, finalized in 2024 by FHFA jointly with five other federal agencies, impose specific requirements on AI-driven property valuations used in mortgage-related transactions. The final rule requires policies and controls designed to ensure a high level of confidence in the estimates produced, protect against data manipulation, avoid conflicts of interest, require random sample testing and reviews, and, critically, comply with applicable nondiscrimination laws.
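Random sample testing is the easiest of these requirements to picture in code. The sketch below draws a reproducible sample of AVM outputs for human review and computes a median absolute percentage error against a benchmark such as a later sale price. The rule does not prescribe this method. The function names, the figures, and the 5% tolerance are all assumptions made for illustration.

```python
import random
from statistics import median


def sample_for_review(valuations, sample_size, seed):
    """Draw a reproducible random sample of AVM outputs for human review."""
    rng = random.Random(seed)
    return rng.sample(valuations, min(sample_size, len(valuations)))


def median_abs_pct_error(pairs):
    """Median absolute percentage error over (avm_estimate, benchmark) pairs."""
    return median(abs(est - actual) / actual * 100 for est, actual in pairs)


# Illustrative numbers only: (AVM estimate, later sale price or appraisal).
pairs = [(510_000, 500_000), (380_000, 400_000), (305_000, 300_000)]
TOLERANCE_PCT = 5.0  # hypothetical internal threshold, not a figure from the rule

sampled = sample_for_review(pairs, sample_size=2, seed=7)
error = median_abs_pct_error(pairs)
print(f"sampled {len(sampled)} of {len(pairs)}; median error {error:.1f}%")
print("escalate" if error > TOLERANCE_PCT else "within tolerance")
```

Fixing the seed makes the sample repeatable, so the same draw can be shown to an auditor later.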

AVM Compliance Requirements at a Glance

Quick Tip: Documentation Is Your Defense

If you use AI-generated valuations in pricing discussions, CMA preparations, or buyer consultations, you need documentation proving those outputs meet federal standards. AI is a powerful input, not a final answer. Any brokerage treating AI valuations as authoritative without governance is building on a foundation regulators are actively inspecting.

AI-Generated Staging Photos Look Great Until They Trigger a Misrepresentation Claim

A listing agent in Denver used generative AI to virtually stage an empty living room. The AI added a fireplace that did not exist. The buyer’s agent noticed — after the offer was accepted. The transaction collapsed, and the listing agent’s E&O insurance carrier opened a review.

Virtual staging is one of the most popular real estate AI applications — and one of the least regulated. NAR’s guidance on generative AI staging is clear: AI-staged images must be disclosed as digitally altered, and they must not misrepresent the physical condition of the property. Adding furniture to an empty room is acceptable. Removing a crack in the ceiling, adding a window that does not exist, or altering the size of a room crosses into misrepresentation.

Your Non-Negotiable Disclosure Protocol

Every AI-staged photo needs a visible label — not buried in MLS remarks, but attached to the image itself

Every listing using AI-generated visuals needs an internal review before publication

Never alter physical property features — only add removable items like furniture and decor
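The three protocol rules above translate directly into a pre-publication check. This is a minimal sketch, assuming your listing system can record these four facts about each photo. The `ListingPhoto` fields and the `staging_problems` helper are hypothetical names, not part of any MLS or NAR tooling.

```python
from dataclasses import dataclass


@dataclass
class ListingPhoto:
    filename: str
    ai_staged: bool
    label_on_image: bool    # disclosure is visible on the image itself
    reviewed: bool          # internal review completed before publication
    alters_structure: bool  # changes walls, windows, room size, or defects


def staging_problems(photo):
    """Return the protocol rules this photo breaks; empty means publishable."""
    if not photo.ai_staged:
        return []
    problems = []
    if not photo.label_on_image:
        problems.append("missing visible AI-staging label")
    if not photo.reviewed:
        problems.append("no internal review on record")
    if photo.alters_structure:
        problems.append("alters physical property features")
    return problems


photo = ListingPhoto("living-room.jpg", ai_staged=True, label_on_image=False,
                     reviewed=True, alters_structure=True)
print(staging_problems(photo))
```

The `alters_structure` flag still has to be set by a person comparing the staged image to the real room. The code only makes sure nobody skips that comparison.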

The NAR Code of Ethics, Article 12, requires Realtors to present a true picture in advertising and representations. That standard applies equally to AI-generated content and human-created content. The tool you used does not change the obligation you carry.

The Safeguards Rule: Your AI Workflows Are Handling Data You Are Required to Protect

Most real estate professionals do not think of themselves as financial institutions. The FTC’s Safeguards Rule disagrees.

If your brokerage handles mortgage referrals, processes financial pre-qualification data, or manages transaction documents containing Social Security numbers, bank account details, or income verification — you fall under the Safeguards Rule’s requirements. And when you feed any of that data into an AI system, you have expanded your attack surface while maintaining the same legal obligation to protect it.

Did You Know?

The Safeguards Rule requires a designated qualified individual to oversee your information security program. It requires risk assessments of every system that touches customer data. An AI tool that ingests client financial data and stores it on servers you do not control is a Safeguards Rule compliance event that demands documented due diligence.

Three Questions to Ask Every AI Vendor

Where is client data stored? Demand specific answers about server locations and data residency.

Who has access? Understand the full chain of data access including subcontractors.

What happens when the engagement ends? Get written data deletion and retention policies.

If the answers are vague, your Safeguards Rule compliance is vague, and the FTC does not accept vague. Platforms that already operate under enterprise compliance standards such as SOC 2 Type II, GDPR, and HIPAA have typically built much of the security architecture the Safeguards Rule demands.

When Automation Becomes Malpractice: Five Decisions an AI Should Never Make Alone

NAR’s broker risk-reduction guidance says it plainly: generative AI outputs carry risks around accuracy, fair housing compliance, and privacy — and human responsibility cannot be delegated to a machine. Real estate automation excels at speed and scale. It fails at judgment, context, and fiduciary duty.

The Five Boundaries You Must Never Cross

1. Contract Interpretation and Legal Advice

An AI can populate a template. It cannot advise a client on whether an inspection contingency protects their interests. The moment an AI offers guidance that a client could reasonably interpret as legal counsel, you have an unauthorized-practice-of-law problem.

2. Final Pricing Recommendations

AI-generated CMAs are excellent starting points. They are not substitutes for agent knowledge of specific neighborhood micro-dynamics. An agent who relies solely on AI pricing without local verification is abdicating professional duty.

3. Disclosure Decisions

What must be disclosed varies by state, municipality, and circumstance. AI trained on general data cannot reliably navigate your jurisdiction’s specific disclosure obligations. A missed disclosure driven by AI guidance is still your missed disclosure.

4. Client Qualification and Steering

When an AI qualifies a lead, the sequence of questions and recommendations must be reviewed for steering risk. An AI that consistently directs certain demographics toward certain neighborhoods creates fair housing violations in real time.

5. Negotiation Strategy and Concessions

Negotiation requires reading emotional dynamics and making judgment calls that balance client interest against transaction viability. AI can provide data to inform negotiation. It cannot conduct one on your client’s behalf without creating agency complications.

The Golden Rule of AI Deployment

Automate the inputs, own the outputs. AI handles the data gathering, scheduling, initial communication, and market analysis. You — the licensed professional — handle the decisions that carry fiduciary weight.

Limited Time Offer

Get Your Free Compliance Assessment

Our team will analyze your current AI stack and identify compliance gaps before regulators do.

Explore the NewVoices Platform

Only 15 assessment slots remaining this month

A Governance Blueprint That Takes 30 Days, Not 30 Months

Most brokerages stall on AI governance because they imagine it requires a dedicated compliance department and a six-figure consulting engagement. It does not. It requires four documented commitments and the discipline to enforce them.

Your Four Non-Negotiable Commitments

Define Your AI Inventory

List every AI tool your agents use — including the ones they adopted without asking permission. CRM plugins, ChatGPT for descriptions, AI staging apps, lead scoring algorithms, voice agents.

A mid-size Austin brokerage completed this exercise and discovered 14 distinct AI tools across 22 agents — only three had been formally approved.
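An inventory does not need special software. A list of rows with two flags is enough to show where to look first. In this sketch the tool names, field names, and helper functions are illustrative assumptions. A spreadsheet with the same columns would work just as well.

```python
# Hypothetical inventory rows; tool names and fields are illustrative.
inventory = [
    {"tool": "CRM lead-scoring plugin", "approved": True, "client_data": True},
    {"tool": "ChatGPT for descriptions", "approved": False, "client_data": False},
    {"tool": "AI staging app", "approved": False, "client_data": False},
    {"tool": "Voice agent", "approved": True, "client_data": True},
    {"tool": "Transaction summarizer", "approved": False, "client_data": True},
]


def unapproved_tools(rows):
    """Tools in use that nobody formally approved."""
    return [r["tool"] for r in rows if not r["approved"]]


def priority_reviews(rows):
    """Unapproved tools that also touch client data: review these first."""
    return [r["tool"] for r in rows if not r["approved"] and r["client_data"]]


print("Unapproved:", unapproved_tools(inventory))
print("Review first:", priority_reviews(inventory))
```

The second list matters most, because an unapproved tool that ingests client financial data is both a governance gap and a data-protection gap.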

Establish Review Workflows

No AI-generated listing description publishes without human review against HUD fair housing requirements. No AI-staged photo posts without disclosure verification. No AI voice agent deploys without conversation script review. The review does not need to be slow — it needs to exist.
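A review workflow “exists” when publication is blocked until the review is recorded. The sketch below shows one way to express that as a gate. The content-type keys, the review names, and the `can_publish` helper are hypothetical labels for the three rules in the paragraph above.

```python
# Hypothetical mapping from AI content type to the review it must pass.
REQUIRED_REVIEW = {
    "listing_description": "fair_housing_review",
    "staged_photo": "disclosure_verification",
    "voice_agent_script": "script_review",
}


def can_publish(content_type, completed_reviews):
    """Allow publication only when the required review is on record."""
    required = REQUIRED_REVIEW.get(content_type)
    if required is None:
        return False  # unknown AI content types are blocked by default
    return required in completed_reviews


print(can_publish("listing_description", {"fair_housing_review"}))
print(can_publish("staged_photo", set()))
print(can_publish("market_report", {"script_review"}))
```

Blocking unknown content types by default is a design choice: a new AI tool cannot publish until someone decides what review it needs.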

Choose Vendors Who Share Your Compliance Burden

Your AI platform should arrive with a current SOC 2 Type II report, documented data handling policies, and a willingness to map capabilities to frameworks like NIST AI RMF. Enterprise-grade platforms let you design AI conversations with compliance guardrails built into the workflow, not appended as a disclaimer.

Train Quarterly, Not Annually

AI capabilities evolve monthly. Regulatory guidance evolves with them. A brokerage that trained agents in January and has not revisited by June is operating on outdated protocols. Thirty-minute quarterly workshops cost almost nothing and prevent almost everything.

The Brokerages That Win the Next Five Years Will Be the Ones That Governed AI Before They Were Forced To

This is not a technology article. It is a liability article.

Every AI capability discussed here — instant lead response, automated valuations, generative listing content, AI-powered client communication, virtual staging — delivers measurable ROI when deployed inside a compliance framework. The same capabilities deployed without governance deliver measurable legal exposure, regulatory scrutiny, and reputational damage.

The data is directionally clear. Brokerages using AI voice agents with proper compliance architecture are converting leads at rates their competitors cannot touch — because they answer in seconds while traditional teams take hours, and they do it at 2 AM on a Saturday with the same professionalism as 10 AM on a Tuesday.

The Regulatory Trendline Is Accelerating

FHFA’s AVM rule was the opening move. FTC enforcement on data security is expanding. HUD’s digital advertising guidance is tightening. State-level AI disclosure requirements are multiplying. The cost of retroactive compliance — remapping your AI workflows after an enforcement action — is 10x the cost of building governance into your deployment from day one.

This is not a chatbot with a real estate license. It is an operational architecture that either protects your brokerage or exposes it — depending entirely on whether you built the governance layer before you turned the system on.

The playbook is in front of you. Inventory your AI tools. Establish human review workflows. Align to federal frameworks. Choose vendors who share your compliance obligations. Train your team continuously.

The difference between AI that grows your business and AI that jeopardizes it is not the technology. It is the framework you wrap around it.

10,000+ professionals trust NewVoices

230% higher lead conversion

24/7 compliant coverage

SOC 2 Type II certified